Introduction

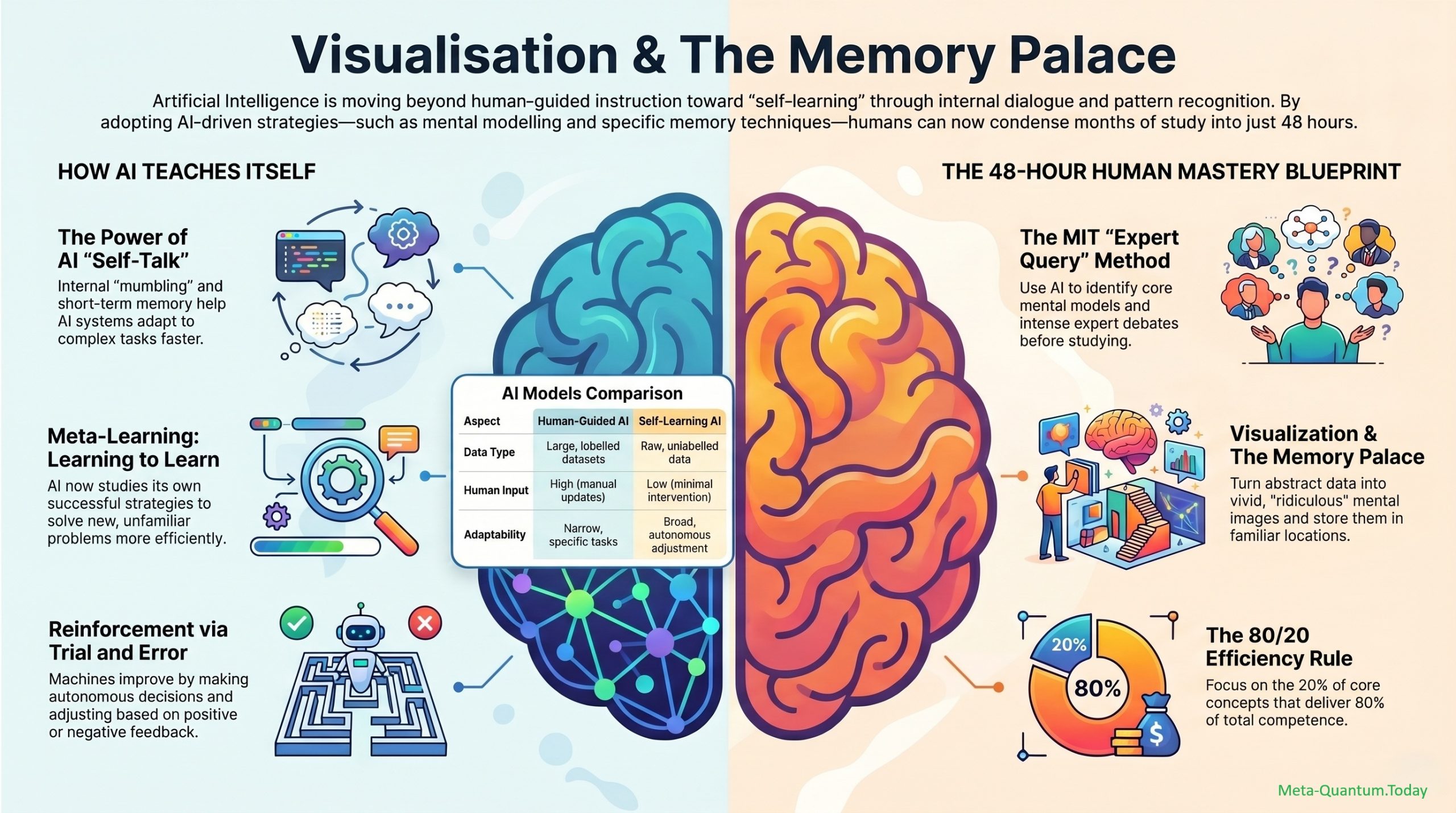

This is the story of an MIT graduate student who passed a qualifying exam in an unfamiliar subject in just 48 hours by treating AI not as a search engine but as an “uncompromising private tutor.” Instead of reading textbooks cover to cover and grinding through practice problems, the student loaded six textbooks, fifteen research papers, and every supplementary document into a single AI workspace, then ran a deliberate three-prompt sequence to extract, map, and stress-test the field. The core argument: most people waste AI’s processing power by asking it to summarize chapters; the real leverage comes from engineering a system that processes the information for you while forcing your brain to do the harder cognitive work.

Features and Concept

The framework rests on three engineered prompts plus a feedback loop, executed inside an isolated AI workspace.

The setup. Gather every PDF, textbook, lecture slide, and paper relevant to the subject into one folder, then drag them into a workspace that is restricted to those sources. This isolation matters: by forcing the AI to reference only your curated academic materials, you sharply reduce its tendency to hallucinate or to pull unverified content from the open web.
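If your materials are scattered across several course folders, a short script can handle the gathering step. This is a minimal sketch, assuming the sources sit in local folders you pass in; the extension list and the `gather_sources` helper are hypothetical, and uploading the resulting folder into the workspace is still done by hand.

```python
import shutil
from pathlib import Path

# Hypothetical extension list covering PDFs, slides, documents, and notes.
SOURCE_EXTENSIONS = {".pdf", ".pptx", ".docx", ".txt", ".md"}


def gather_sources(search_roots, dest):
    """Copy every matching file under search_roots into one flat dest folder.

    Returns the destination paths. Files with the same name get a numeric
    suffix (a_1.pdf, a_2.pdf, ...) so nothing is silently overwritten.
    """
    dest = Path(dest)
    dest.mkdir(parents=True, exist_ok=True)
    dest_resolved = dest.resolve()
    copied = []
    for root in search_roots:
        # sorted() materializes the listing first, so files copied during
        # this loop are never picked up again.
        for path in sorted(Path(root).rglob("*")):
            if not path.is_file() or path.suffix.lower() not in SOURCE_EXTENSIONS:
                continue
            if dest_resolved in path.resolve().parents:
                continue  # skip files already sitting in the destination folder
            target = dest / path.name
            counter = 1
            while target.exists():
                target = dest / f"{path.stem}_{counter}{path.suffix}"
                counter += 1
            shutil.copy2(path, target)
            copied.append(target)
    return copied
```

Usage would look like `gather_sources(["course_materials", "papers"], "workspace_upload")` (hypothetical folder names). Renaming on collision rather than overwriting matters here, because two chapters exported from different textbooks can easily share a file name.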

Prompt 1 — Extract the scaffolding. “What are the five core mental models or frameworks that all experts in this field agree on?” This phrasing deliberately skips surface trivia and forces the system to isolate the underlying logic — the foundational rules experts converged on through years of work. You inherit the same structural scaffolding professionals use to organize complex information.

Prompt 2 — Map the contested edges. “What are the top three areas where experts argue most fiercely? What are their strongest arguments?” This turns your view of the subject from a flat table of contents into a three-dimensional map of the discipline, showing where the field is actively evolving and keeping you from blindly accepting established theories.

Prompt 3 — Build the test mechanism. “Create 10 complex questions based on these materials. The requirement: you must be able to tell at a glance whether someone truly understands the concepts or just memorized the textbook.” These are scenario-based problems that demand application of the mental models, not vocabulary recall.
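Since the method runs these prompts as a deliberate sequence, it can help to keep them as a fixed script you work through in order. A minimal sketch, assuming you paste each prompt into the workspace by hand; the `StagePrompt` type and `session_script` helper are hypothetical, and only the prompt wording comes from the method itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StagePrompt:
    purpose: str  # what this stage is meant to extract
    text: str     # prompt wording, copied from the method


# Hypothetical container; the three prompt texts are quoted from the method.
PROMPT_SEQUENCE = (
    StagePrompt(
        "scaffolding",
        "What are the five core mental models or frameworks that all experts "
        "in this field agree on?",
    ),
    StagePrompt(
        "contested edges",
        "What are the top three areas where experts argue most fiercely? "
        "What are their strongest arguments?",
    ),
    StagePrompt(
        "test mechanism",
        "Create 10 complex questions based on these materials. The requirement: "
        "you must be able to tell at a glance whether someone truly understands "
        "the concepts or just memorized the textbook.",
    ),
)


def session_script(prompts=PROMPT_SEQUENCE):
    """Return the prompts as numbered lines, in the order they should be sent."""
    return [f"Prompt {i} ({p.purpose}): {p.text}" for i, p in enumerate(prompts, start=1)]


if __name__ == "__main__":
    for line in session_script():
        print(line)
```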

The feedback loop. Submit your answers and let the AI cross-reference your documents to flag errors. The strict rule: never ask for the correct answer; instead ask, “Where did I go wrong?” This rerouting is the heart of the method, because it keeps you responsible for the fix and repeatedly exposes your weak spots instead of handing you a passive correction.
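The rule is easy to break in the moment, so one option is to make it mechanical: build every follow-up from a template and refuse any note that asks for the answer. A minimal sketch, assuming you paste the resulting message into the workspace yourself; `feedback_request`, `asks_for_answer`, and the phrase list are hypothetical and only approximate the rule.

```python
import re

# Illustrative, not exhaustive: phrasings that would turn the loop into
# passive correction by requesting the answer instead of a diagnosis.
ANSWER_REQUEST_PATTERNS = (
    r"\bcorrect answer\b",
    r"\bright answer\b",
    r"\bgive me the answer\b",
    r"\bwhat(?: is|'s) the answer\b",
    r"\bshow me the solution\b",
)


def asks_for_answer(message: str) -> bool:
    """Return True if the message asks for the answer rather than for feedback."""
    text = message.lower()
    return any(re.search(pattern, text) for pattern in ANSWER_REQUEST_PATTERNS)


def feedback_request(question: str, my_answer: str, note: str = "") -> str:
    """Build a follow-up that submits an answer and asks only where it went wrong."""
    if asks_for_answer(note):
        raise ValueError("Ask where you went wrong, not for the correct answer.")
    parts = [
        f"Question: {question}",
        f"My answer: {my_answer}",
        "Using only the uploaded sources, where did I go wrong?",
    ]
    if note:
        parts.append(note)
    return "\n".join(parts)
```

A keyword filter like this is deliberately crude; its job is to interrupt the habit of asking for the answer, not to police every possible phrasing.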

Related Sections About the Subjects

Why the prompt phrasing matters

This is essentially advanced prompt engineering applied to learning. As prompt-engineering writeups on Glasp note, prompts can be classified by depth — Level 1 is a simple inquiry, Level 2 adds context and background, and Level 3 demands complex analysis or specific structural output (Glasp: Use Our AI Prompt Kit). The three prompts in the video are deliberately Level 3 — they specify the type of cognitive output (consensus frameworks, contested arguments, discriminating questions) rather than asking for a generic summary. Related techniques like Chain-of-Thought and Tree-of-Thought also work by breaking a large task into structured sub-questions, which is exactly what this framework does for an entire syllabus (Glasp: Harnessing AI Power Locally).
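The three levels can be made concrete as a prompt builder that only reaches Level 3 once you specify the analysis or output structure you want. A minimal sketch, assuming the level boundaries work as summarized in the paragraph (context for Level 2, a required analysis or output format for Level 3); `build_prompt` and the example strings are hypothetical.

```python
def build_prompt(question, context=None, output_spec=None):
    """Compose a prompt and classify it as Level 1, 2, or 3.

    Level 1: a bare question.
    Level 2: the question plus context or background.
    Level 3: the prompt also demands a specific analysis or output structure.
    """
    parts = []
    if context:
        parts.append(f"Context: {context}")
    parts.append(question)
    if output_spec:
        parts.append(f"Required output: {output_spec}")

    if output_spec:
        level = 3
    elif context:
        level = 2
    else:
        level = 1
    return level, "\n".join(parts)


if __name__ == "__main__":
    level, prompt = build_prompt(
        "What are the five core mental models or frameworks that all experts "
        "in this field agree on?",
        context="Answer only from the uploaded textbooks and papers.",
        output_spec="A numbered list; for each model, name it and cite the source that states it.",
    )
    print(level)  # 3
    print(prompt)
```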

Why the feedback loop works — the science of active recall

The “never ask for the answer, ask where I went wrong” rule maps directly onto decades of cognitive psychology research on retrieval practice. The landmark Roediger & Karpicke (2006) study found that students who used retrieval practice retained 80% more material after a week than students who simply re-read the same passages four times, and Karpicke & Blunt (2011) showed retrieval practice outperformed even concept mapping on inference-based comprehension (Glasp: Active Recall). A Rowland (2014) meta-analysis of 159 studies put the average testing-effect benefit at 0.50 standard deviations, rising to 0.75 for free-recall formats. The MIT student’s loop is essentially industrial-strength retrieval practice — generate questions, attempt them, get error-flagged, attempt again.
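To make “0.50 standard deviations” concrete: a standardized mean difference divides the gap between two group means by their pooled standard deviation. A minimal sketch with made-up scores (not data from any study cited here); many meta-analyses report Hedges’ g, which applies a small-sample correction to this same quantity.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(treatment, control):
    """Standardized mean difference: gap in means divided by the pooled SD."""
    n1, n2 = len(treatment), len(control)
    s1, s2 = stdev(treatment), stdev(control)
    pooled_sd = sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))
    return (mean(treatment) - mean(control)) / pooled_sd


if __name__ == "__main__":
    # Made-up recall scores (percent), not data from any cited study.
    retrieval_group = [45, 55, 65]
    restudy_group = [40, 50, 60]
    print(cohens_d(retrieval_group, restudy_group))  # 0.5
```

Here both groups have a standard deviation of 10 points and the retrieval group averages 5 points higher, so the effect is half a standard deviation.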

Building a personal knowledge stack

This workflow pairs naturally with highlight-and-review tools. The bottleneck in most reading isn’t acquisition but retention — surveys cited on Glasp suggest typical users retain less than 1% of their saved highlights because saving feels like learning but isn’t (Glasp: Spaced Repetition for Readers). Layering spaced review on top of the three-prompt method (revisit the 10 questions after a day, three days, a week) converts a 48-hour sprint into durable long-term knowledge rather than a crammed exam pass.
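The review schedule described above is easy to put on a calendar. A minimal sketch, assuming each interval (one day, three days, one week) is counted from the day the 48-hour sprint ends rather than from the previous review; `review_dates` is a hypothetical helper.

```python
from datetime import date, timedelta

# Offsets from the text: revisit after one day, three days, and one week.
# Assumption: each offset is counted from the day the sprint ends.
REVIEW_OFFSETS_DAYS = (1, 3, 7)


def review_dates(sprint_end, offsets=REVIEW_OFFSETS_DAYS):
    """Return the dates on which to re-attempt the 10 questions from memory."""
    return [sprint_end + timedelta(days=offset) for offset in offsets]


if __name__ == "__main__":
    for review_day in review_dates(date(2025, 1, 10)):
        print(review_day.isoformat())  # 2025-01-11, then 2025-01-13, then 2025-01-17
```

If you prefer expanding gaps between reviews instead, pass cumulative offsets such as `(1, 4, 11)`; the helper itself does not change.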

The shift in skill set

The video’s broader claim — that mastering a subject now depends on engineering precise, demanding questions rather than memorizing faster — echoes a recurring theme in AI-learning writing: the user’s job is to add context, structure, and constraints to elicit higher-quality output, and to use AI as a “rubber duck” that exposes gaps in your own thinking (Glasp: Harnessing the Power of AI).

Conclusion and Key Takeaways

The MIT student didn’t use AI to reduce cognitive load — they used it to increase it. By offloading the search, structuring, and question-generation work to a constrained AI workspace, they freed up six solid hours to spar with the material at the level of mental models and contested arguments. The framework is portable to any subject.

Key takeaways:

- Isolate your sources. Use a workspace like NotebookLM with only your curated PDFs to reduce hallucination and keep the AI grounded.

- Ask for frameworks, not facts. Prompt 1 extracts the five core mental models experts agree on — the scaffolding, not the trivia.

- Map the disagreements. Prompt 2 surfaces the three fiercest expert debates, turning a flat outline into a 3D map of the field.

- Generate discriminating questions. Prompt 3 produces 10 scenario-based problems that separate understanding from memorization.

- Run the loop with strict discipline. Submit answers, ask “where did I go wrong?” — never “what’s the correct answer?” This preserves the retrieval-practice effect that drives long-term retention.

- Engineer cognitive load, don’t avoid it. The tool is for forcing harder engagement, not for shortcutting effort.

- The skill that matters now is writing precise, demanding prompts that expose your own knowledge gaps — not picking the “right” AI interface.

Related References

- Active Recall: The Science-Backed Study Method That Actually Works — Glasp

- Spaced Repetition for Readers: How to Actually Retain Your Highlights — Glasp

- Harnessing AI Power Locally: A Guide to Effective Prompt Engineering — Glasp

- How to Supercharge Your Learning with Spaced Repetition, Active Recall, and Advanced Prompt Techniques — Glasp

- Use Our AI Prompt Kit To Maximize Marketing Results (Three Levels of Prompts) — Glasp

- Harnessing the Power of AI: A Guide to Effective Prompting and Interaction — Glasp

- Roediger, H. L. & Karpicke, J. D. (2006). “Test-Enhanced Learning.”

- Karpicke, J. D. & Blunt, J. R. (2011). “Retrieval Practice Produces More Learning Than Elaborative Studying With Concept Mapping.”