Introduction

This fireside chat features Carina Hong, founder and CEO of Axiom, in conversation with Matt Mcllwain, Managing Director at Madrona Venture Group (an investor in Axiom since day one), at The Montgomery Summit. Carina shares her journey from southern China — where she taught herself English to read the Graduate Texts in Mathematics (GTM) — through MIT (math and physics), Oxford as a Rhodes Scholar, and Stanford, where she dropped out of her concurrent PhD and law degree to start Axiom. Inspired by mathematical geniuses like Galois (who died at 21) and Ramanujan (who died around 30 from malnutrition and ill health), Carina is motivated by a haunting question: what fundamental breakthroughs has humanity missed because we are bounded by the scarcity of outlier reasoning minds? Axiom’s mission is to build superhuman AI reasoners — starting with the world’s first AI Mathematician.

About Axiom and Core Concept

The AI Mathematician

Axiom is building a general AI mathematician — not just a model that solves a narrow class of math problems, but a system that reasons across mathematics broadly. The historical trajectory is striking:

- 2016: Google began applying deep neural networks to mathematics (pre-Transformer, pre-ChatGPT).

- 2019: François Charton and Guillaume Lample (Mistral co-founder) showed Transformers could outperform Mathematica on symbolic integration. Charton is now full-time technical staff at Axiom.

- December 2024 (Putnam Exam): Axiom Prover scored a perfect 120/120 on the Putnam Competition — an exam where the median score among 150,000 math majors is zero, and only five humans have scored a perfect 120 in 98 years. The top human this year (an MIT undergraduate) scored 110. The best LLM (DeepSeek by Mass Arena) scored 103.

- Late January 2025: Axiom Prover independently solved four open research conjectures sent by professors from Tacoma Israeli University, Boston College, and Williams College — problems the professors themselves could not solve. Reported by Will Knight in WIRED.

Verified AI — The Real Product Insight

The crucial differentiator is not just answer-generation but verifiable correctness. Axiom Prover outputs computer programs for mathematical proofs (in Lean 4). If the program compiles and you see a check mark, the logic is 100% verified — you don’t need to be a math professor to trust the output. This positions Axiom not just as a math tool but as the foundation for verification across the entire generative-AI economy (code verification, chip verification, agent verification).

Axiom Math — Complete Guide: Products, Installation, Setup, Configuration & Prover Use Cases

1. Overview — What is Axiom Math?

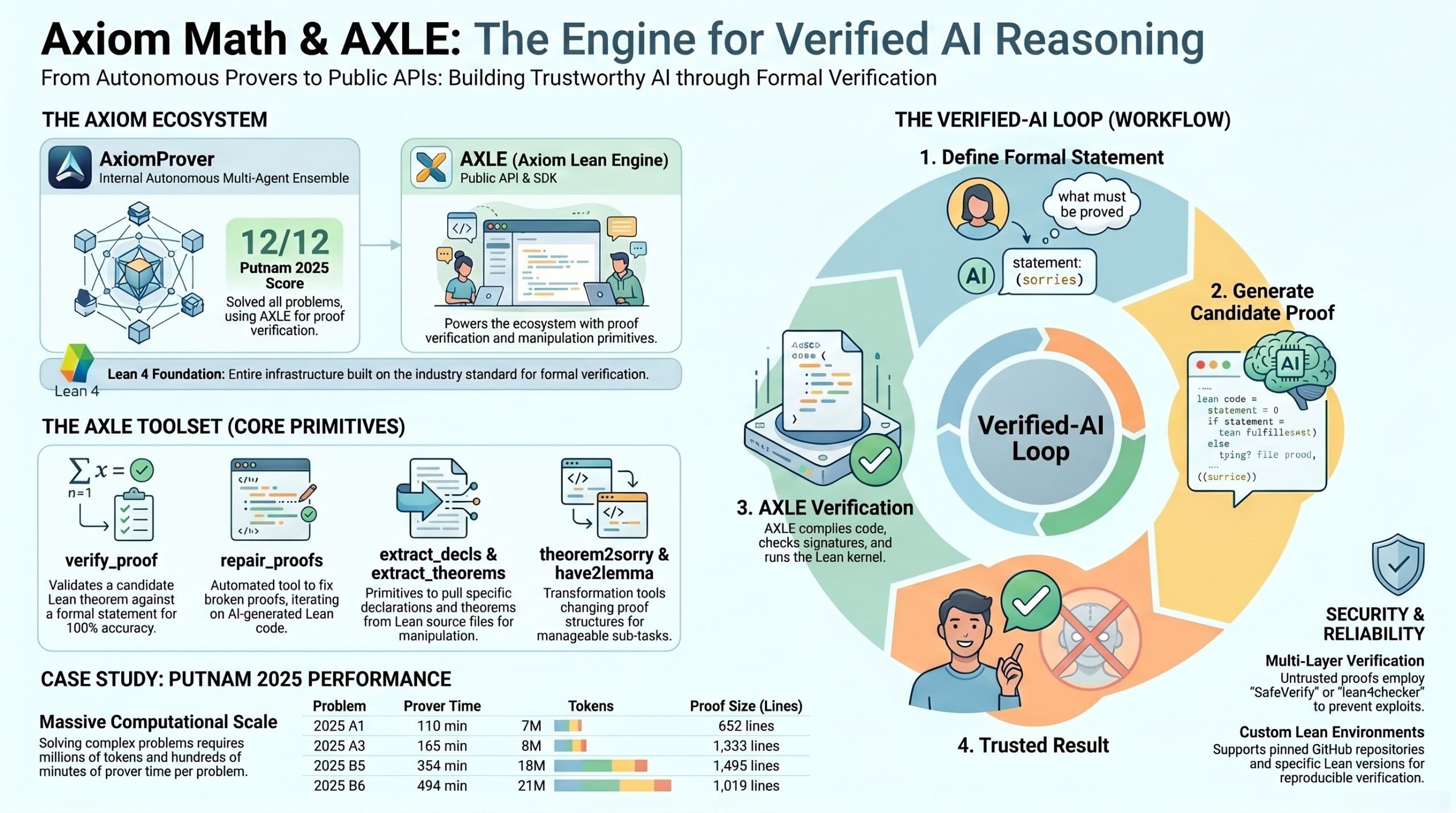

Axiom Math (axiommath.ai) is the Silicon Valley company founded by Karina Hong (featured in the Montgomery Summit fireside chat) building AI mathematicians and verified-AI infrastructure. They ship two distinct products developers should know about:

| Product | What it is | Access |

|---|---|---|

| AxiomProver | Their flagship autonomous multi-agent ensemble theorem prover for Lean 4 — the system that scored 12/12 on Putnam 2025 | Currently internal / partnership-based (not yet a public API). Solutions are open-sourced on GitHub. |

| AXLE (Axiom Lean Engine) | Public API + Python SDK + CLI exposing the proof verification and manipulation primitives Axiom uses internally to train AxiomProver | Public release — sign up at the console for API key |

AXLE is what you can install and use today. AxiomProver is what uses AXLE under the hood.

2. AXLE — The Axiom Lean Engine

AXLE provides interactive tools for exploring, validating, and manipulating Lean 4 mathematical proofs. According to the AXLE homepage, “AXLE provides proof verification and manipulation primitives we’ve used across all of our research efforts, including training AI models and AxiomProver’s 12/12 on Putnam 2025.”

Core toolset (all available via Python, CLI, and HTTP API)

verify_proof— validate a candidate Lean theorem against a formal statementcheck— check if Lean code is validextract_decls/extract_theorems— extract declarations from Lean sourcerename— rename declarationstheorem2lemma/theorem2sorry/sorry2lemma— proof-structure transformationsmerge— merge proof filessimplify_theorems— simplify theorem statementsrepair_proofs— auto-repair broken proofshave2lemma/have2sorry— convert localhavestatementsdisprove— counter-example toolingnormalize— canonicalization

3. Installation

Requirements

- Python 3.11+

- Internet connection (to reach the AXLE API — the Lean compilation runs server-side)

Option A — Install from PyPI (recommended)

pip install axiom-axle

Option B — Install from Source

git clone <https://github.com/AxiomMath/axiom-lean-engine.git>

cd axiom-lean-engine

pip install -e .

Or directly via SSH:

pip install git+ssh://git@github.com/AxiomMath/axiom-lean-engine

Option C — Development Installation (with dev tools + pre-commit hooks)

git clone <https://github.com/AxiomMath/axiom-lean-engine.git>

cd axiom-lean-engine

make setup-env

Verify the install

# CLI check

axle --version

# Python import check

python -c "from axle import AxleClient; print('OK')"

4. Setup — API Key & Authentication

AXLE uses API key auth for rate limiting. Without a key, you get 10 concurrent active requests max.

Step 1: Get an API key

Visit the console: **https://axle.axiommath.ai/app/console**

Step 2: Set it as an environment variable (recommended)

export AXLE_API_KEY=your-api-key

Add to ~/.bashrc, ~/.zshrc, or your project’s .env for persistence.

Step 3 (optional): Pass directly in code

from axle import AxleClient

client = AxleClient(api_key="your-api-key")

5. Configuration

Environment variables

| Variable | Default | Description |

|---|---|---|

AXLE_API_KEY | — | API key for authentication |

AXLE_API_URL | https://axle.axiommath.ai | API server URL |

AXLE_TIMEOUT_SECONDS | 1800 | Base retry-window timeout |

AXLE_MAX_CONCURRENCY | 20 | Max concurrent requests |

export AXLE_API_KEY=your-api-key

export AXLE_API_URL=https://axle.axiommath.ai

export AXLE_TIMEOUT_SECONDS=600

export AXLE_MAX_CONCURRENCY=50

Python client configuration

from axle import AxleClient

# Custom URL, timeout, and concurrency

client = AxleClient(

api_key="your-api-key",

url=AxleClient.DEFAULT_URL,

base_timeout_seconds=600,

max_concurrency=50,

)

CLI configuration (global options)

# Custom API URL

axle --url <https://axle.axiommath.ai> check file.lean --environment lean-4.28.0

# JSON output

axle --json check file.lean --environment lean-4.28.0

# Output to file

axle theorem2sorry input.lean --environment lean-4.28.0 -o output.lean

Lean environments

Every API request requires an environment parameter. AXLE supports multiple Lean versions with pre-built dependencies (typically Mathlib). At time of writing, the recommended starter is lean-4.28.0.

Discover available environments:

# CLI

axle environments

# Python

async with AxleClient() as client:

envs = await client.environments()

for env in envs:

print(f"{env.name}: {env.description}")

# HTTP

curl -s <https://axle.axiommath.ai/v1/environments> | jq

Example environment definitions:

[

{

"name": "lean-4.21.0",

"lean_toolchain": "leanprover/lean4:v4.21.0",

"imports": "import Mathlib",

"description": "Lean 4.21.0 with Mathlib"

},

{

"name": "pnt-4.26.0",

"lean_toolchain": "leanprover/lean4:v4.26.0",

"repo_url": "<https://github.com/AlexKontorovich/PrimeNumberTheoremAnd>",

"imports": "import Mathlib\\nimport PrimeNumberTheoremAnd",

"description": "Lean + Mathlib 4.26.0 with Terence Tao's Prime Number Theorem Project"

}

]

Custom environments can pin a specific GitHub repo + revision + subdir — useful for working with particular formalization projects.

6. Quick Start — First “Hello, Theorem” Check

Python

import asyncio

from axle import AxleClient

async def main():

async with AxleClient() as client:

result = await client.check(

content="import Mathlib\\ntheorem citation_needed : 1 + 1 = 2 := by decide",

environment="lean-4.28.0",

)

print(f"Valid: {result.okay}")

if result.lean_messages.errors:

print("Errors:", result.lean_messages.errors)

asyncio.run(main())

CLI

# From file

axle check mytheorem.lean --environment lean-4.28.0

# From stdin

echo "def meaning_of_life := 42\\n#print meaning_of_life" \\

| axle check - --environment lean-4.28.0

HTTP

curl -s -X POST <https://axle.axiommath.ai/api/v1/check> \\

-H "Authorization: Bearer $AXLE_API_KEY" \\

-H "Content-Type: application/json" \\

-d '{

"content": "import Mathlib\\ntheorem citation_needed : 1 + 1 = 2 := by decide",

"environment": "lean-4.28.0"

}' | jq

Try it in-browser first: an interactive Colab demo notebook is linked from the AXLE Quick Start page — handy for kicking the tires before touching pip.

7. The Core Use Case — verify_proof (the Prover Verification Workflow)

This is the heart of the verified-AI loop Karina described in the fireside chat. The flow:

- You (or your AI agent) write a formal statement with

sorryplaceholders — defining what must be proved. - You produce a candidate proof — the actual Lean code attempting the proof.

- AXLE compiles both, checks signatures match, runs the kernel, and tells you whether the proof is 100% valid.

Conceptual example

-- formal_statement: defines the theorem signature

import Mathlib

theorem add_comm (a b : Nat) : a + b = b + a := by sorry

-- content: provides the actual proof

import Mathlib

theorem add_comm (a b : Nat) : a + b = b + a := Nat.add_comm a b

Python API

result = await axle.verify_proof(

formal_statement="import Mathlib\\ntheorem citation_needed : 1 = 1 := by sorry",

content="import Mathlib\\ntheorem citation_needed : 1 = 1 := rfl",

environment="lean-4.28.0",

permitted_sorries=["helper"], # Optional: helper lemmas allowed to remain unproven

mathlib_options=False, # Optional: enable conventional Mathlib options

ignore_imports=False, # Optional: handle import mismatches

timeout_seconds=120, # Optional: max 900s (15 min) for non-admin

)

print(result.okay) # True if proof is valid

print(result.content) # The processed Lean code

CLI

# Basic

axle verify-proof statement.lean proof.lean --environment lean-4.28.0

# With permitted sorries

axle verify-proof statement.lean proof.lean \\

--permitted-sorries helper1,helper2 \\

--environment lean-4.28.0

# Pipeline-friendly (proof from stdin)

cat proof.lean | axle verify-proof statement.lean - --environment lean-4.28.0

# Strict mode (non-zero exit on invalid) — perfect for CI

if axle verify-proof statement.lean proof.lean --strict --environment lean-4.28.0 > /dev/null; then

echo "Proof valid ✅"

else

echo "Proof invalid ❌"

fi

HTTP

curl -s -X POST <https://axle.axiommath.ai/api/v1/verify_proof> \\

-H "Authorization: Bearer $AXLE_API_KEY" \\

-d '{

"content": "import Mathlib\\ntheorem citation_needed : 1 = 1 := rfl",

"formal_statement": "import Mathlib\\ntheorem citation_needed : 1 = 1 := by sorry",

"environment": "lean-4.28.0"

}' | jq

Output structure

{

"okay": false,

"content": "import Mathlib\\n\\ntheorem foo : 1 = 1 := rfl\\n",

"lean_messages": { "errors": [], "warnings": [], "infos": [] },

"tool_messages": {

"errors": ["Theorem 'foo' does not match expected signature: expected type 2 = 2, got 1 = 1"],

"warnings": [],

"infos": []

},

"failed_declarations": ["foo"],

"timings": { "total_ms": 160, "formal_statement_ms": 3, "declarations_ms": 0, "candidate_ms": 28 }

}

Common verification error patterns

| Pattern | Meaning |

|---|---|

Missing required declaration '{name}' | A symbol in formal_statement is missing from content |

Kind mismatch for '{name}': candidate has {X} but expected {Y} | E.g., theorem vs def |

Theorem '{name}' does not match expected signature | Theorem type changed |

Unsafe/partial function '{name}' detected | Use of a disallowed function |

Axiom '{axiom}' is not in the allowed set of standard axioms | Disallowed axiom used |

Declaration '{name}' uses 'sorry' which is not allowed | Theorem not actually proven |

Candidate uses banned 'open private' command | Disallowed open private |

Important security note

verify_proof trusts the Lean environment for speed. A creative adversary could exploit Lean metaprogramming to make invalid proofs appear valid. For untrusted proofs (e.g., from competitors, untrusted AI outputs), additionally run:

- lean4checker — Lean FRO’s

.oleanverifier - Comparator — Lean FRO’s gold-standard proof judge

- SafeVerify — battle-tested public proof checker (used by AxiomProver in the Putnam 2025 verification pipeline)

8. Real-World Case Study — AxiomProver on Putnam 2025

Their public Putnam 2025 repo is the cleanest case study of the prover-plus-verification stack working end-to-end. Headline numbers from the GitHub README:

- Final score: 12/12 (8 solved during the competition window, the remaining 4 in the days after)

- AxiomProver is described as “an autonomous multi-agent ensemble theorem prover for Lean 4.21.0”

- Each solution is a fully verified Lean 4 proof, checked by SafeVerify

Sample proof complexity (from their published metrics):

| Problem | Prover time | Tokens | Proof size |

|---|---|---|---|

| 2025 A1 | 110 min | 7M | 652 lines, 23 theorems, 561 tactics |

| 2025 A3 | 165 min | 8M | 1,333 lines, 78 theorems, 1,701 tactics |

| 2025 B5 | 354 min | 18M | 1,495 lines, 66 theorems, 1,967 tactics |

| 2025 B6 | 494 min | 21M | 1,019 lines, 30 theorems, 1,052 tactics |

Reproducing the verification yourself:

git clone <https://github.com/AxiomMath/Putnam2025>

cd Putnam2025

lake run verify

# Output:

# Verifying A1 solution ... ✅

# Verifying A2 solution ... ✅

# ... through B6

This is the exact pattern Karina described: AxiomProver generates the proof; a verifier independently confirms it; you trust the check mark, not the model.

9. Practical Patterns for Your Own AI-Verification Pipeline

A reasonable architecture if you want to use AXLE in your own agent loop (mirrors AxiomProver’s design):

┌─────────────────┐ ┌──────────────────┐ ┌─────────────────┐

│ LLM Agent │ ──▶│ Lean Code Gen │ ──▶ │ AXLE check │

│ (Claude/etc.) │ │ (formal proof) │ │ (syntax valid?)│

└─────────────────┘ └──────────────────┘ └────────┬────────┘

▲ │

│ ▼

│ ┌──────────────────┐

│ ┌──────────────────────────┐ │ AXLE verify_proof│

└───────│ Repair / regenerate on │◀────│ (semantic ✅?) │

│ tool_messages.errors │ └────────┬─────────┘

└──────────────────────────┘ │

▼

┌───────────────────┐

│ SafeVerify │

│ (adversarial ✅) │

└───────────────────┘

Tips:

- Use

permitted_sorriesto let your agent ship partial proofs and iterate (very useful in RL training loops with verifiable rewards — exactly the RLVR pattern Karina described). - Use

repair_proofsandsimplify_theoremsas deterministic post-processing to clean up LLM output before verification. - Pin a specific environment (

lean-4.28.0or your custom repo-pinned env) for reproducibility — bumping Lean versions mid-experiment will silently break things. - Set realistic timeouts: hard ceiling is 900s (15 min) per request. AxiomProver’s longest Putnam proof took ~8 hours of wall-clock prover time, but each individual verification call is much shorter.

Video – Axiom Math at The Montgomery Summit

Related Sections

From Deterministic to Non-Deterministic Workflows — Why Verification Matters Now

The world has shifted from deterministic, predictable workflows to non-deterministic LLM outputs — essentially conjectures. Just as math conjectures need a prover, LLM-generated code, designs, and agent actions need a verifier. Carina illustrated the risk with a recent OpenClaw anecdote: a user had OpenClaw book a Davos speaking slot for him, only to discover he owed $31,000 he could not afford. As autonomous agents are deployed at scale into safety-critical and regulated industries, one agent should certify another agent’s actions before they propagate.

The “Why Now” — Three Movements Converging in 2024

- LLMs became genuinely good at informal reasoning — high-level sketching, planning, and outlining (a capability that solidified only after September 2024).

- Lean 4 matured into industry-grade infrastructure. The formal-proof language Lean has been developed since 2019 (notably by undergrads at Imperial College London — many of Carina’s Math Olympiad friends, several now full-time at Axiom). Lean 4’s release in September 2023 enabled engineering-scale work, and the broader mathematical community accepted it in 2024.

- RLVR (Reinforcement Learning with Verifiable Rewards) delivered breakthrough gains in coding because code provides instant pass/fail feedback. Math is the other natively verifiable domain — and it’s exactly where Axiom plays.

Real-World Applications Already Emerging

- Hardware/chip verification: GPUs need 100% correctness — there is no partial credit. Verilog/RTL verification is a natural target.

- CPU hypervisor optimization: AWS recently published 260,000 lines of formal-language code that took world experts 3–5 years to write by hand. Carina notes wryly: “vibe coding has not changed their life.”

- Beyond SMT/SAT solvers: Traditional formal tools suffer from state-space explosion (a counter value of 100 unrolls 100 times). Lean’s mathematical abstractions and invariants let Axiom Prover bypass exhaustive search — a key technical insight.

- Historical precedent: The Paris trade union once demanded formal verification of the subway’s automatic switching system; ESA used formal verification on the Ariane project; Boeing and Airbus have applied it for decades.

The Team — A Magnet for Top Talent

- Fabian Gloeckle — formerly Director at Facebook AI Research, his prior work was deployed across all of Meta for LLM-generated software testing. He champions Donald Knuth’s literate programming vision: programmers reason in natural language while verified code ships directly to deployment.

- Professor Ken Ono — former Vice Provost of the University of Virginia and former Vice President of the American Mathematical Society, who left academia to join Axiom after watching Axiom Prover solve number-theory conjectures he could not solve himself. For Ken, this represents a new way of doing mathematics — mathematicians operate at higher abstraction (intuitions about proof direction) while Axiom Prover fills in the low-level details in real time.

The Abstraction Ladder

Carina draws a parallel to programming history: punch cards (1950s) → Fortran → low-level compilers → Python → natural language. Each lift in abstraction freed humans for higher-leverage work. AI mathematicians do the same for mathematics: instead of solving 1–2 Millennium Prize problems in a lifetime, a mathematician like Ken could potentially attempt a hundred. The Hardy–Littlewood–Ramanujan analogy lands beautifully here — Lean is the modern proof assistant that converts intuition into rigorous logic.

The Code-with-Proof Insight

A key technical contribution: rather than running RL with two divergent objective functions (Python code + English math proof), Axiom converts math proofs into formal-language form that structurally resembles Python or C — making joint optimization tractable. This is why Axiom Prover’s capability transfers directly from math to code verification, saturating several code-verification benchmarks without modification.

Conclusion and Key Takeaways

Axiom is not building “another LLM that does math.” It is building the verification substrate for the entire generative-AI economy — starting with the most rigorous testbed humanity has (mathematics) and generalizing outward to code, chips, and autonomous agents.

Key takeaways:

- Verified AI is the next frontier. As LLMs flood the world with non-deterministic output, the bottleneck shifts from generation to trustworthy verification.

- Math is the proving ground, not the product. Anything reducible to code and logic is a candidate market — chip verification, regulated enterprise code, safety-critical agent operations.

- The “why now” is a triple convergence: LLMs reasoning informally + Lean 4 industrial maturity + RLVR breakthroughs — all aligned in 2024.

- Abstraction lifts unlock human leverage. Mathematicians stop checking nitty-gritty steps and operate on intuition; Axiom Prover handles the formal details.

- Trust enables autonomy. Without verification, high-stakes industries cannot deploy AI agents fully. Axiom is building the agent that certifies other agents.

- Recruitment is a leading indicator. When a former AMS Vice President leaves a Vice Provost role to join an 8-month-old company, the technical signal is strong.

Related References

- Axiom Math home: https://axiommath.ai

- AXLE site: https://axle.axiommath.ai

- AXLE docs: https://axle.axiommath.ai/v1/docs/

- AXLE console (get API key): https://axle.axiommath.ai/app/console

- AXLE GitHub: https://github.com/AxiomMath/axiom-lean-engine

- Putnam 2025 solutions repo: https://github.com/AxiomMath/Putnam2025

- Axiom blog (“Building The Reasoning Engine at Axiom”): https://axiommath.ai/blog/

- SafeVerify (third-party adversarial checker): https://github.com/GasStationManager/SafeVerify

- lean4checker (Lean FRO official verifier): https://github.com/leanprover/lean4checker

- Lean 4 reference on validating proofs: https://lean-lang.org/doc/reference/latest/ValidatingProofs/

- Wall Street Journal coverage of Ken Ono leaving UVA for Axiom

- Putnam Competition — annual undergraduate math exam (median score: 0)

- Lean 4 — formal proof assistant: https://lean-lang.org

- Madrona Venture Group — Axiom’s early investor